Data Transforms with dbt

In this guide, we show a few examples on how to leverage dbt transformations in Faros Community Edition.

Note: Those transformations should soon be configurable in the corresponding connection Transformation tab in Airbyte, but we need to switch to a more recent version of Airbyte first, which requires work to deal with API changes on the Airbyte side.

Tasks creators transformation

In this example, we'll run a dbt transformation that results in a new table in our Postgres database.

This guide assumes that you have ingested task data. Otherwise, please follow our quickstart.

The transformation code lives in the Faros Community Edition repo.

It computes the number of tasks created per reporter by month/year and ranks the reporters according to the number of tasks created.

Running the dbt transformation

- Export the Faros DB connection details and credentials. Please find the values in the .env file.

export DBT_HOST=<FAROS_DB_HOST in .env>

export DBT_PORT=<FAROS_DB_PORT in .env>

export DBT_USER=<FAROS_DB_USER in .env>

export DBT_PASS=<FAROS_DB_PASSWORD in .env>

export DBT_DBNAME=<FAROS_DB_NAME in .env>- Run the dbt transformation

cd dbt-transforms

dbt runFind the new table in Metabase

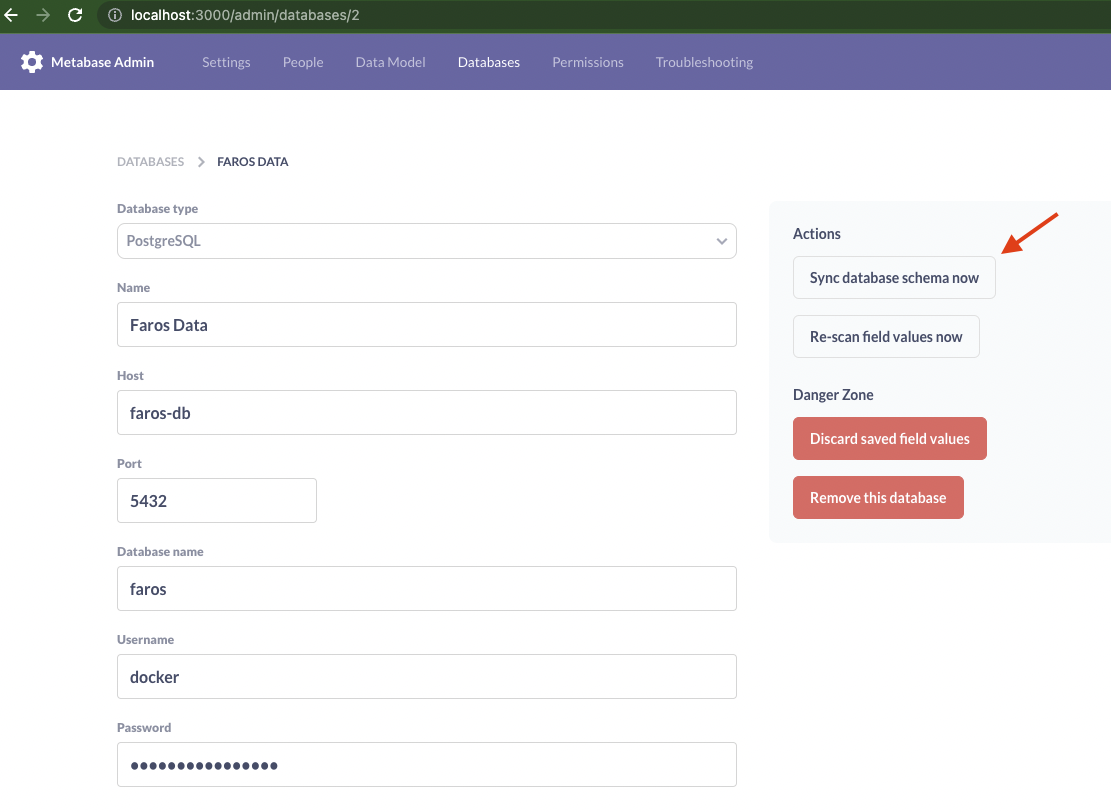

Find the Faros DB in Metabase and sync the database schema

Alternatively, from the repository root, run:

cd init && npm i

node lib/metabase/init --metabase-url http://localhost:3000 \

--username $METABASE_USER \

--password $METABASE_PASSWORD \

--database $FAROS_DB_NAME \

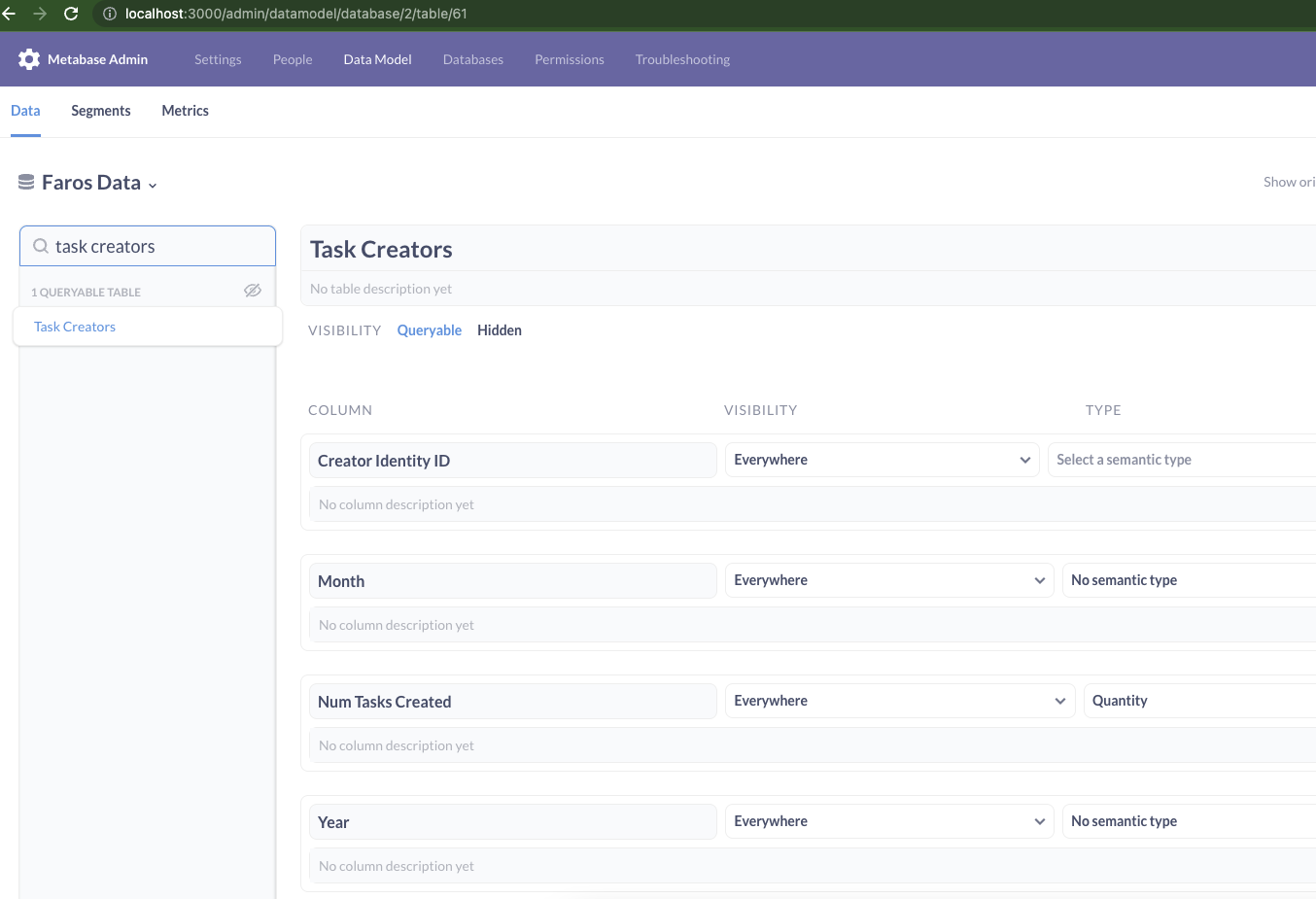

--sync-schemaYou should be able to see the new table in the Data Model tab

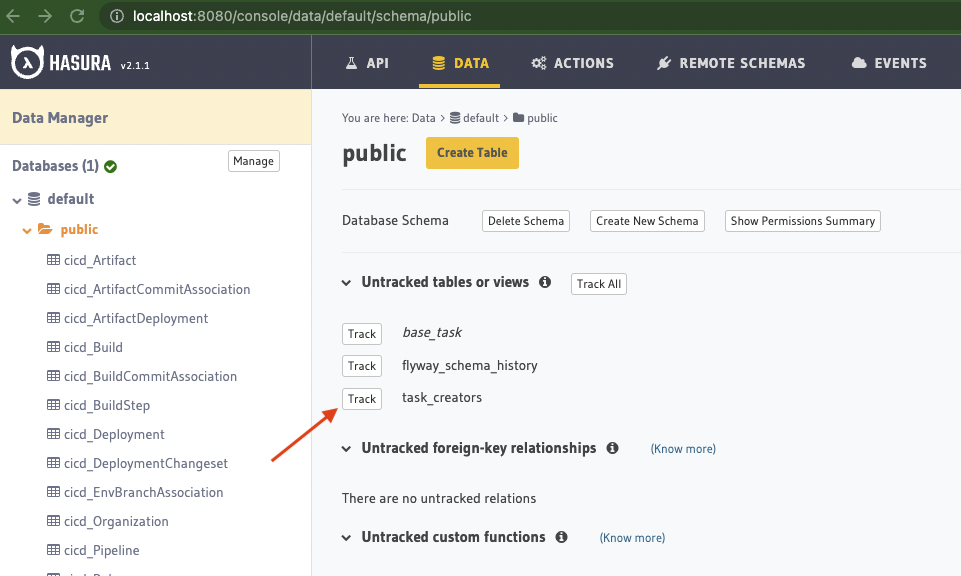

Track the new table in Hasura

Track the new table in Hasura

Alternatively, from the repository root, run:

cd init && npm i

node lib/hasura/init --hasura-url http://localhost:8080 \

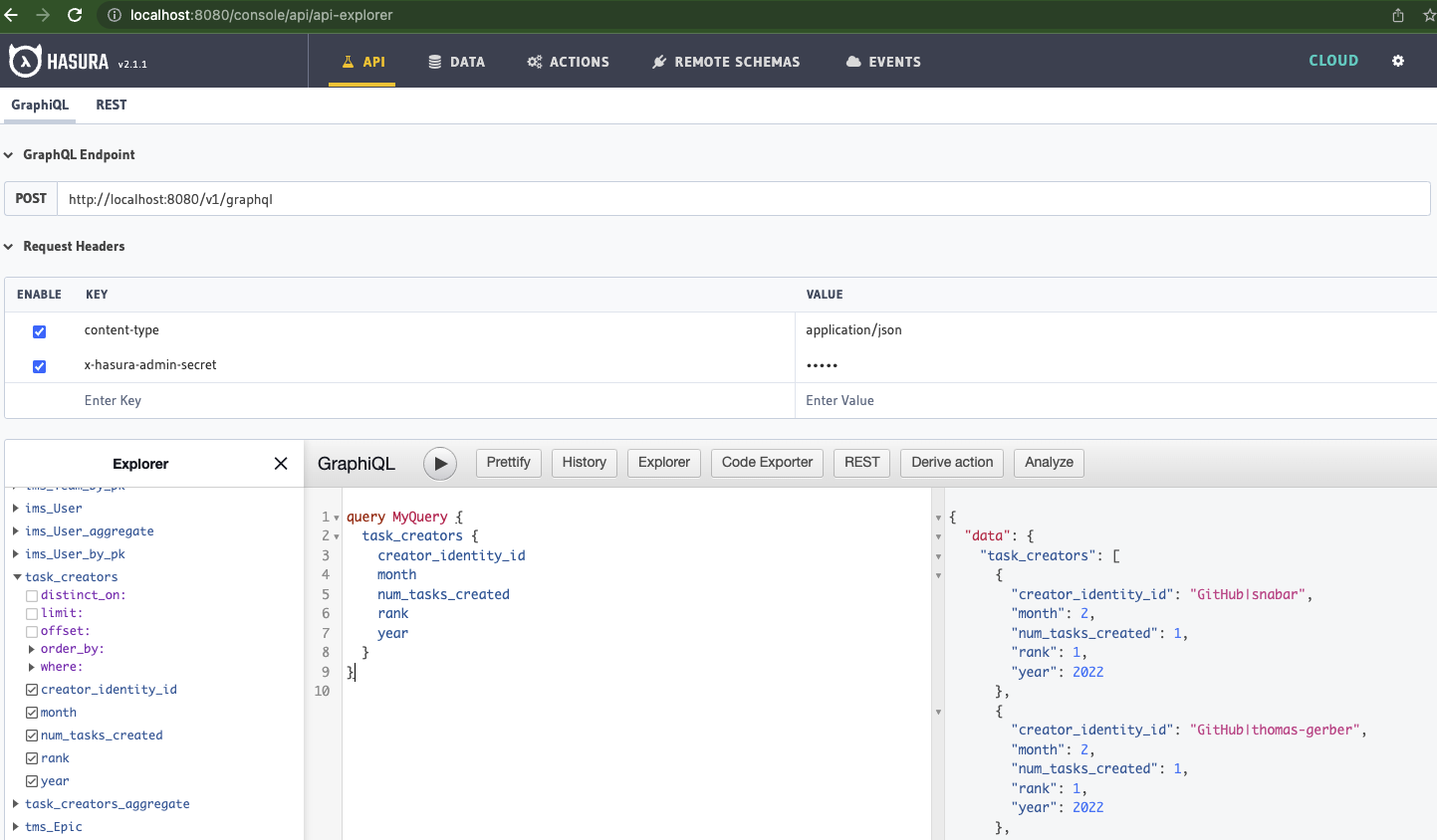

--admin-secret $HASURA_GRAPHQL_ADMIN_SECRETYou should be able to query the new table

Task to incident transformation

In this example, we'll show a dbt transformation that results in new records being added to an existing table in our Postgres database.

This guide assumes that you have ingested task data. Otherwise, please follow our quickstart.

The transformation code lives in the Faros Community Edition repo.

Some engineering organization track incidents in their task management systems (like Jira). Hence, those incidents will be stored as tasks in our canonical schema.

The goal of this transformation is to identify tasks records that are in fact incidents, convert them to incidents and persist them in the incidents table. In this case, we assume tasks with Incident as type_detail to be actually incidents.

Because, unlike the previous example, we do not want to recreate the target table (incidents) when we find new records, we used dbt incremental materialization. We specify a predicate to only consider "fresh" records in the source table. We also enforce an uniqueness constraint on the target table id. If there are new records violating the constraint, they'll be updated (deleted and then inserted into the incidents table).

Finally, we had to work around an issue where Postgres generated columns (e.g., id in our incidents table) were being included in the SQL that dbt generates from our models. We modified their macro to be able to specify a list of columns to ignore when generating the SQL queries for incremental models.

Build to deployment transformation

In this example, we'll show another dbt transformation that results in new records being added to an existing table in our Postgres database.

This guide assumes that you have ingested build data. Otherwise, please follow our quickstart.

The transformation code lives in the Faros Community Edition repo.

Many CI/CD sources cannot distinguish a build pipeline from a deployment one. However, in practice it is possible to distinguish based on pipeline, build or step name.

Similar to the task to incident example, we wish to identify builds that are actually deployments, and persist them in the deployments table. In this case, we consider builds for which the corresponding pipeline name contains deploy to be actually deployments.

Updated about 1 year ago